
I have a really large file with approximately 15 million entries. Each line in the file contains a single string (call it the key).


I need to find the duplicate entries in the file using Java. I tried to use a HashMap to detect duplicate entries, but that approach throws a 'java.lang.OutOfMemoryError: Java heap space' error.

How can I solve this problem?

I think I could increase the heap space and try again, but I wanted to know whether there are more efficient solutions that don't require tweaking the heap space.

Eduard Wirch

Maximus

7 Answers

The key is that your data will not fit into memory. You can use external merge sort for this:

Partition your file into multiple smaller chunks that fit into memory. Sort each chunk, eliminate the duplicates (now neighboring elements).

Merge the chunks and again eliminate the duplicates when merging. Since you will have an n-way merge here, you can keep the next k elements from each chunk in memory; once the items for a chunk are depleted (they have been merged already), grab more from disk.
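The two steps above can be sketched roughly as follows. This is only an illustration, not the answerer's code: the class name `ExternalDuplicateFinder` and the `maxLinesPerChunk` parameter are made up for the example, and it keeps one buffered reader per chunk instead of managing "the next k elements" by hand. Because the goal here is to report duplicates rather than drop them, this variant leaves repeated keys in the chunks and detects them during the merge.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.Collections;
import java.util.Comparator;
import java.util.List;
import java.util.PriorityQueue;

public class ExternalDuplicateFinder {

    /** One open sorted chunk plus its current, not yet merged, line. */
    private static final class ChunkCursor {
        final BufferedReader reader;
        String current;

        ChunkCursor(Path chunk) throws IOException {
            reader = Files.newBufferedReader(chunk);
            current = reader.readLine();
        }
    }

    /** Returns each key that occurs more than once, in sorted order. */
    public static List<String> findDuplicates(Path input, int maxLinesPerChunk) throws IOException {
        // Step 1: split the input into sorted chunks that fit in memory.
        List<Path> chunks = new ArrayList<>();
        try (BufferedReader in = Files.newBufferedReader(input)) {
            List<String> buffer = new ArrayList<>();
            String line;
            while ((line = in.readLine()) != null) {
                buffer.add(line);
                if (buffer.size() >= maxLinesPerChunk) {
                    chunks.add(writeSortedChunk(buffer));
                    buffer.clear();
                }
            }
            if (!buffer.isEmpty()) {
                chunks.add(writeSortedChunk(buffer));
            }
        }

        // Step 2: n-way merge; equal keys come out of the heap back to back.
        PriorityQueue<ChunkCursor> heap =
                new PriorityQueue<>(Comparator.comparing((ChunkCursor c) -> c.current));
        for (Path chunk : chunks) {
            ChunkCursor cursor = new ChunkCursor(chunk);
            if (cursor.current != null) heap.add(cursor); else cursor.reader.close();
        }

        List<String> duplicates = new ArrayList<>();
        String previous = null;
        while (!heap.isEmpty()) {
            ChunkCursor cursor = heap.poll();
            String key = cursor.current;
            boolean alreadyReported = !duplicates.isEmpty()
                    && duplicates.get(duplicates.size() - 1).equals(key);
            if (key.equals(previous) && !alreadyReported) {
                duplicates.add(key);
            }
            previous = key;
            cursor.current = cursor.reader.readLine();
            if (cursor.current != null) heap.add(cursor); else cursor.reader.close();
        }

        for (Path chunk : chunks) {
            Files.deleteIfExists(chunk);
        }
        return duplicates;
    }

    /** Sorts the buffered lines and writes them to a temporary chunk file. */
    private static Path writeSortedChunk(List<String> lines) throws IOException {
        Collections.sort(lines);
        Path chunk = Files.createTempFile("chunk", ".txt");
        Files.write(chunk, lines);
        return chunk;
    }
}
```

The chunk size is the knob to tune: it should be as large as the heap comfortably allows, since fewer chunks mean fewer open files during the merge.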

BrokenGlass

I'm not sure if you'd consider doing this outside of Java, but if so, this is very simple in a shell:

Michael

You probably can't load the entire file at one time, but you can store the hash and line number in a HashSet with no problem.

Pseudo code...
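The answerer's pseudocode is not reproduced here. One possible sketch of the idea, under my own assumptions, is shown below: it stores only the 32-bit `String.hashCode()` of each line (not the line numbers the answer mentions), and then makes a second pass to confirm real duplicates, because two different keys can share a hash. The class name `HashPrefilter` is made up for the example. Note that a `HashSet<Integer>` of boxed values still has noticeable per-entry overhead; a primitive-int collection would be smaller.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.HashSet;
import java.util.Set;
import java.util.TreeSet;

public class HashPrefilter {

    /** Returns every key that occurs more than once in the input file. */
    public static Set<String> findDuplicates(Path input) throws IOException {
        // Pass 1: remember hashes only, and note which hashes occur twice or more.
        Set<Integer> seenHashes = new HashSet<>();
        Set<Integer> repeatedHashes = new HashSet<>();
        try (BufferedReader in = Files.newBufferedReader(input)) {
            String line;
            while ((line = in.readLine()) != null) {
                int hash = line.hashCode();
                if (!seenHashes.add(hash)) {
                    repeatedHashes.add(hash);
                }
            }
        }
        seenHashes = null; // no longer needed; lets the GC reclaim it

        // Pass 2: only lines whose hash repeated are kept as full strings,
        // so hash collisions between different keys are not reported.
        Set<String> candidates = new HashSet<>();
        Set<String> duplicates = new TreeSet<>();
        try (BufferedReader in = Files.newBufferedReader(input)) {
            String line;
            while ((line = in.readLine()) != null) {
                if (repeatedHashes.contains(line.hashCode()) && !candidates.add(line)) {
                    duplicates.add(line);
                }
            }
        }
        return duplicates;
    }
}
```

The second pass only holds full strings for lines whose hash repeated, so memory use depends on how many duplicates (and collisions) the file actually contains.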

Andrew White

I don't think you need to sort the data to eliminate duplicates. Just use a quicksort-inspired approach.

- Pick k pivots from the data (unless your data is really wacky, this should be pretty straightforward)

- Using these k pivots divide the data into k+1 small files

- If any of these chunks are too large to fit in memory repeat the process just for that chunk

- Once you have manageably sized chunks, just apply your favorite method (hashing?) to find duplicates

Note that k can be equal to 1.
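A minimal sketch of the pivot scheme above, with some assumptions of mine: the pivots are passed in by the caller (how to pick them is left open, as in the answer), the class name `PivotPartitioner` is invented, and the "repeat the process for oversized chunks" step is omitted, so every bucket is assumed to fit in memory. Equal keys always land in the same bucket, which is why a per-bucket `HashSet` is enough.

```java
import java.io.BufferedReader;
import java.io.BufferedWriter;
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;
import java.util.TreeSet;

public class PivotPartitioner {

    /** Splits the input into pivots.size() + 1 bucket files, then checks each bucket. */
    public static Set<String> findDuplicates(Path input, List<String> pivots) throws IOException {
        String[] sortedPivots = pivots.toArray(new String[0]);
        Arrays.sort(sortedPivots);

        int bucketCount = sortedPivots.length + 1;
        Path[] bucketFiles = new Path[bucketCount];
        BufferedWriter[] writers = new BufferedWriter[bucketCount];
        for (int i = 0; i < bucketCount; i++) {
            bucketFiles[i] = Files.createTempFile("bucket" + i + "-", ".txt");
            writers[i] = Files.newBufferedWriter(bucketFiles[i]);
        }

        // Partition: binary search over the pivots picks the bucket for each key.
        try (BufferedReader in = Files.newBufferedReader(input)) {
            String line;
            while ((line = in.readLine()) != null) {
                int pos = Arrays.binarySearch(sortedPivots, line);
                int bucket = pos >= 0 ? pos : -pos - 1; // insertion point if not a pivot
                writers[bucket].write(line);
                writers[bucket].newLine();
            }
        } finally {
            for (BufferedWriter writer : writers) {
                writer.close();
            }
        }

        // Each bucket is assumed small enough for an in-memory HashSet.
        Set<String> duplicates = new TreeSet<>();
        for (Path bucketFile : bucketFiles) {
            Set<String> seen = new HashSet<>();
            try (BufferedReader in = Files.newBufferedReader(bucketFile)) {
                String line;
                while ((line = in.readLine()) != null) {
                    if (!seen.add(line)) {
                        duplicates.add(line);
                    }
                }
            }
            Files.deleteIfExists(bucketFile);
        }
        return duplicates;
    }
}
```

With an empty pivot list this degenerates to a single bucket, that is, plain in-memory hashing.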

ElKamina

One way I can imagine solving this is to first use an external sorting algorithm to sort the file (searching for "external sort java" yields lots of results with code). Then you can iterate the file line by line; duplicates will now obviously be directly following each other, so you only need to remember the previous line while iterating.

DarkDust
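The line-by-line scan of an already sorted file could look roughly like this. It is only a sketch: the class and method names are made up, and the file is assumed to have been sorted beforehand by whatever external sort you choose.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;

public class SortedFileScanner {

    /** Reports each key that appears more than once; the file must already be sorted. */
    public static List<String> duplicatesInSortedFile(Path sortedFile) throws IOException {
        List<String> duplicates = new ArrayList<>();
        try (BufferedReader in = Files.newBufferedReader(sortedFile)) {
            String previous = null;   // the only state kept between lines
            boolean reported = false; // avoids listing a key once per extra copy
            String line;
            while ((line = in.readLine()) != null) {
                if (line.equals(previous)) {
                    if (!reported) {
                        duplicates.add(line);
                        reported = true;
                    }
                } else {
                    previous = line;
                    reported = false;
                }
            }
        }
        return duplicates;
    }
}
```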

If you cannot build up a complete list because you don't have enough memory, you might try doing it in loops. That is, create a HashMap but only store a small portion of the items (for example, those starting with 'A'). Gather the duplicates for that group, then continue with 'B', and so on.

Of course you can select any kind of 'grouping' (e.g. the first 3 characters, the first 6, etc.).

It will only take (many) more iterations.
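As a rough sketch of this multi-pass idea, assuming grouping by first character: the code below first makes one pass to find which first characters occur at all, then re-reads the file once per group and keeps only that group's keys in memory. The class name `PrefixPasses` and the helper `groupOf` are invented for the example, and empty lines get a group of their own.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.HashSet;
import java.util.Set;
import java.util.TreeSet;

public class PrefixPasses {

    /** Group key: the first character, or -1 for an empty line. */
    private static int groupOf(String line) {
        return line.isEmpty() ? -1 : line.charAt(0);
    }

    /** Finds duplicates by scanning the file once per first-character group. */
    public static Set<String> findDuplicates(Path input) throws IOException {
        // Discovery pass: which groups exist? This set stays tiny.
        Set<Integer> groups = new TreeSet<>();
        try (BufferedReader in = Files.newBufferedReader(input)) {
            String line;
            while ((line = in.readLine()) != null) {
                groups.add(groupOf(line));
            }
        }

        Set<String> duplicates = new TreeSet<>();
        for (int group : groups) {
            // Only keys of the current group are held in memory during this pass.
            Set<String> seen = new HashSet<>();
            try (BufferedReader in = Files.newBufferedReader(input)) {
                String line;
                while ((line = in.readLine()) != null) {
                    if (groupOf(line) == group && !seen.add(line)) {
                        duplicates.add(line);
                    }
                }
            }
        }
        return duplicates;
    }
}
```

The trade-off is the one the answer points out: less memory per pass, but the whole file is read once for every group.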

Michel Keijzers

You might try a Bloom filter, if you're willing to accept a certain amount of statistical error. Guava provides one, but there's a pretty major bug in it right now that should be fixed probably next week with release 11.0.2.
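To show what the trade-off looks like, here is a small hand-rolled Bloom filter. It is not Guava's implementation or API; the class `SimpleBloomFilter`, its method name, and the choice of hash functions (`String.hashCode()` combined with an FNV-1a hash) are assumptions made for the sketch. A key reported as "possibly seen" may be a false positive, but a key reported as new is definitely new.

```java
import java.util.BitSet;

public class SimpleBloomFilter {
    private final BitSet bits;
    private final int size;
    private final int hashCount;

    /** size = number of bits, hashCount = number of bit positions set per key. */
    public SimpleBloomFilter(int size, int hashCount) {
        this.bits = new BitSet(size);
        this.size = size;
        this.hashCount = hashCount;
    }

    /**
     * Adds the key and returns true if all of its bits were already set,
     * meaning the key was possibly (not certainly) added before.
     */
    public boolean possiblySeenThenAdd(String key) {
        int h1 = key.hashCode();
        int h2 = fnv1a(key);
        boolean allSet = true;
        for (int i = 0; i < hashCount; i++) {
            // Double hashing: derive the i-th position from two base hashes.
            int index = Math.floorMod(h1 + i * h2, size);
            if (!bits.get(index)) {
                allSet = false;
                bits.set(index);
            }
        }
        return allSet;
    }

    /** 32-bit FNV-1a over the key's UTF-16 code units, used as a second hash. */
    private static int fnv1a(String key) {
        int hash = 0x811C9DC5;
        for (int i = 0; i < key.length(); i++) {
            hash ^= key.charAt(i);
            hash *= 16777619;
        }
        return hash;
    }
}
```

In use, you would call `possiblySeenThenAdd` for every line and collect the lines for which it returns `true`; those are duplicate candidates, which can then be verified exactly in a second pass if the statistical error is not acceptable.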

Louis Wasserman



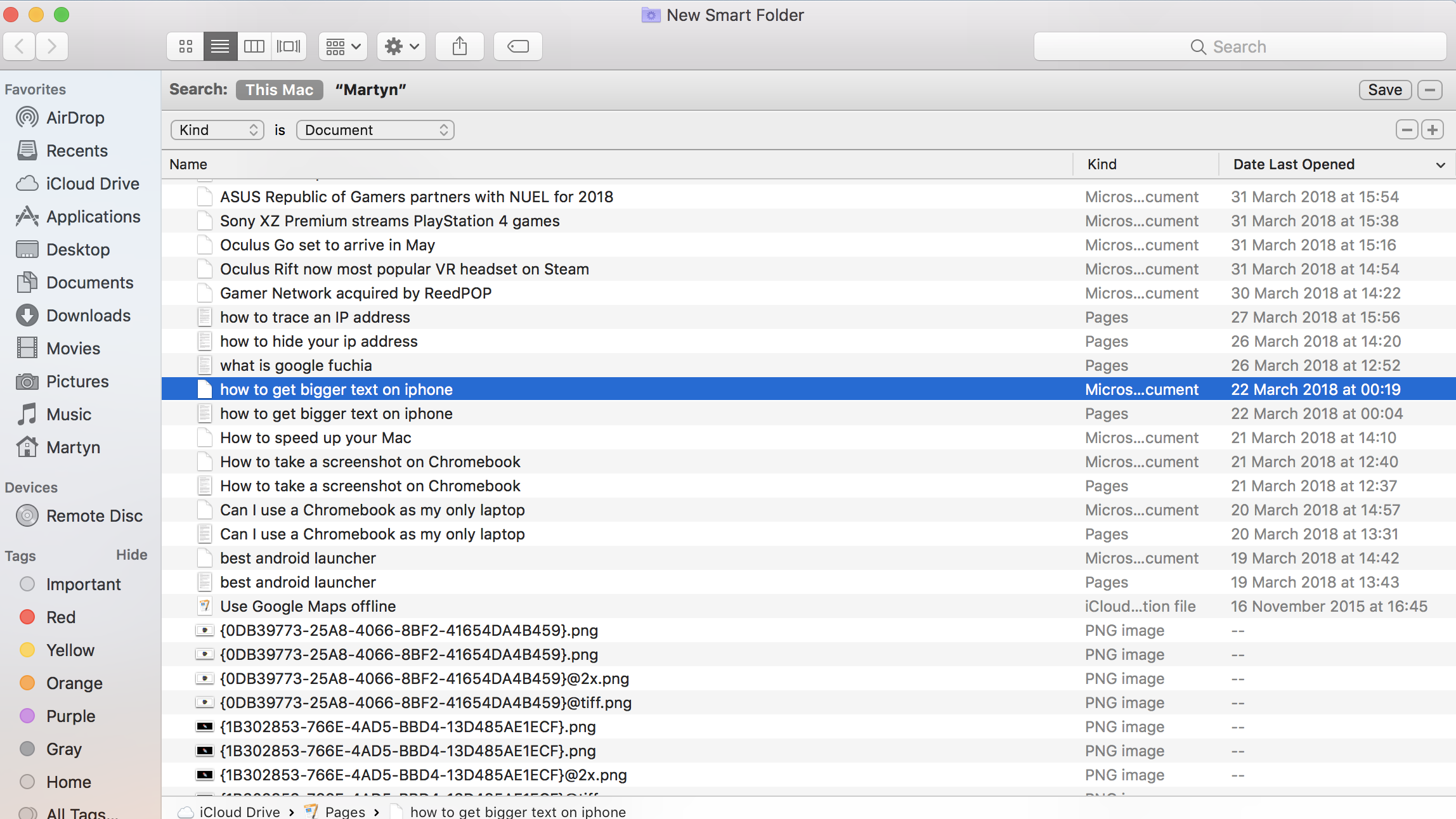

Duplicate files are a waste of disk space, consuming that precious SSD space on a modern Mac and cluttering your Time Machine backups. Remove them to free up space on your Mac.

There are many polished Mac apps for this — but they’re mostly paid software. Those shiny apps in the Mac app store will probably work well, but we have some good options if you don’t want to whip out your credit card.

Gemini and Other Paid Apps

If you do want to spend money on a duplicate-file-finder app, Gemini looks like one of the best options, with one of the slickest interfaces. The trial version worked well for us, and the interface certainly stands out from barebones, free applications like dupeGuru. Gemini can also scan your iTunes and iPhoto library for duplicates. If you’re willing to pay $10 for a better interface, Gemini seems like a good bet.

There are other, similarly polished duplicate-file-finders in the Mac App Store, too — but Apple flags this one as an Editors’ Choice, and we can see why.

As a bonus, the demo version of Gemini allows you to search for and find duplicates, but not remove them. So, if you really wanted, you could use the demo to find duplicates on your Mac, locate them in Finder, and then remove them by hand. Other paid duplicate-file-finder apps have demos that function in a similar way, so this may be convenient if you just want to run an occasional scan and you don’t mind deleting a handful of duplicates by hand.

There are many good-quality, paid duplicate-file-finding apps for Mac. You can find them with a quick trip to the Mac App Store.

dupeGuru, dupeGuru Music Edition, and dupeGuru Pictures Edition


We also recommended dupeGuru for finding duplicate files on Windows. This application is both open-source and cross-platform. It’s simple to use — open the application, add one or more folders to scan, and click Scan. You’ll see a list of duplicate files, and you can select them and easily move them to the Trash or another folder. You can also preview them, verifying that they actually are duplicates before tossing them away.

dupeGuru is available in three different flavors — a standard edition, an edition designed for finding duplicate music files, and an edition designed for finding duplicate pictures. These tools won’t just find exact duplicates, but should find the same songs encoded at different bitrates and the same picture resized, rotated, or edited.

This application is utilitarian, but it does its job well. You don’t get the shiny interface that you do with the paid Mac apps, but it’s a good free tool for finding and clearing duplicate files. If you want a free application for finding and removing duplicate files on a Mac, this is the one to use.

iTunes

iTunes has a built-in feature that can find duplicate music and video files in your iTunes library. It won’t help with other types of files or media files not in iTunes, but it can be a quick way to free up some space if you have a big media library with duplicate files.

To use this feature, open iTunes, click the View menu, and select Show Duplicate Items. You can also hold the Option key on your keyboard and then click the Show Exact Duplicate Items link. This will only show duplicates with the same exact name, artist, and album.

After you click this, iTunes will show you a sorted list of duplicates next to each other. You can go through the list and delete any duplicates from your computer if they actually are duplicates you want to delete. When you’re done, click View > Show All Items to get back to the default list of media.

That’s it? Yup, that’s it. We didn’t want to recommend potentially confusing Terminal commands that output a list of duplicates to a text file, awkward methods that involve scrolling through a list of all the files on your Mac in the Finder, or applications that require disabling the Mac’s Gatekeeper feature to run untrusted binaries. The tools above will do the job, whether you want a barebones-and-free utility or a polished-but-paid application.
